Serverless Feels Cheap, Until You Hit the Curve

The Illusion of Cheap Infrastructure

Serverless pricing is one of the most compelling ideas in modern infrastructure.

You only pay for what you use. There is no idle cost. Scaling is automatic. You do not need to think about servers, capacity planning, or infrastructure management.

At low traffic, it feels almost free.

And that is exactly why so many teams choose it early.

It removes friction. It removes decisions. It allows teams to move fast without thinking too much about infrastructure.

But that experience is tied to a specific phase.

What most teams do not realize is that serverless is not inherently cheap.

It is phase-efficient.

And once your system moves beyond that phase, the economics begin to change, quietly at first, then all at once. If you have seen how platforms like Vercel and Cloudflare behave under real production load, the pattern is consistent with where serverless breaks in practice.

The Cost Curve Nobody Models

Most comparisons between serverless and traditional backend are framed incorrectly.

They focus on:

- developer experience

- scalability

- operational complexity

All of those matter.

But the real difference is not technical.

It is temporal.

It is about how cost behaves over time.

| Stage | Serverless | Traditional Backend |

|---|---|---|

| Early (low traffic) | Extremely cheap | Relatively expensive |

| Growth | Increasing cost | Stabilizing |

| Scale | Expensive | More efficient |

Serverless is front-loaded efficiency.

Traditional backend is back-loaded efficiency.

The crossover point is where most teams get surprised, because they never modeled it in the first place.

What Actually Drives Serverless Cost

To understand the curve, you need to look beyond “pay per request” and break down what you are actually paying for.

Invocation Cost

Every request has a cost.

At low volume, this is negligible. At scale, it becomes dominant.

The difference between one million and one hundred million requests is not just a technical difference.

It is a financial one.

Execution Duration

Serverless charges for time, not just execution.

A slightly inefficient function:

- extra database call

- redundant parsing

- unnecessary logic

Individually, these are small.

At scale, they multiply millions of times.

This is where cost starts to drift away from expectation.

Cold Starts and Hidden Overhead

Cold starts are often treated as a performance issue.

But they are also a cost issue.

They increase execution time, introduce retries, and create unpredictable behavior under load.

You are not just paying for your logic.

You are paying for everything around it.

Data Movement (The Quiet Multiplier)

This is one of the most underestimated cost drivers.

Serverless architectures tend to be:

- more distributed

- more event-driven

- more dependent on external services

Which means:

- more network calls

- more data transfer

- more cross-service communication

And none of that is free.

Why Traditional Backend Becomes Cheaper

Traditional backend looks inefficient early because you are paying for unused capacity.

Servers run even when idle. Containers sit waiting. Resources are provisioned ahead of demand.

But at scale, this model flips.

You start amortizing cost across consistent traffic.

Instead of paying per request, you are paying for sustained throughput.

And that changes everything.

A Real Scenario (Where Things Shift)

Consider a SaaS API with:

- ~50 million requests per month

- moderate business logic

- database interaction

- some external API calls

At this stage, both models technically work.

But economically, they behave very differently.

| Stage | Serverless | Backend |

|---|---|---|

| 1M requests | Very cheap | Over-provisioned |

| 10M requests | Comparable | Comparable |

| 50M+ | Increasing fast | Stabilizing |

The important part is not the numbers.

It is the direction.

Serverless continues scaling linearly with usage.

Backend tends to plateau once capacity is optimized.

The Most Dangerous Phase: The Middle

The biggest mistake does not happen early.

And it does not happen at massive scale.

It happens in the middle.

At this stage:

- traffic is meaningful

- cost is rising

- but not alarming enough to trigger change

So teams stay.

They delay optimization. They postpone migration. They accept gradual increases.

Until one day, cost is no longer gradual.

And by that point, the system is already deeply coupled to the architecture. This is also why most SaaS teams overpay for infrastructure without realizing it, the expensive decisions were made months before the bill arrived.

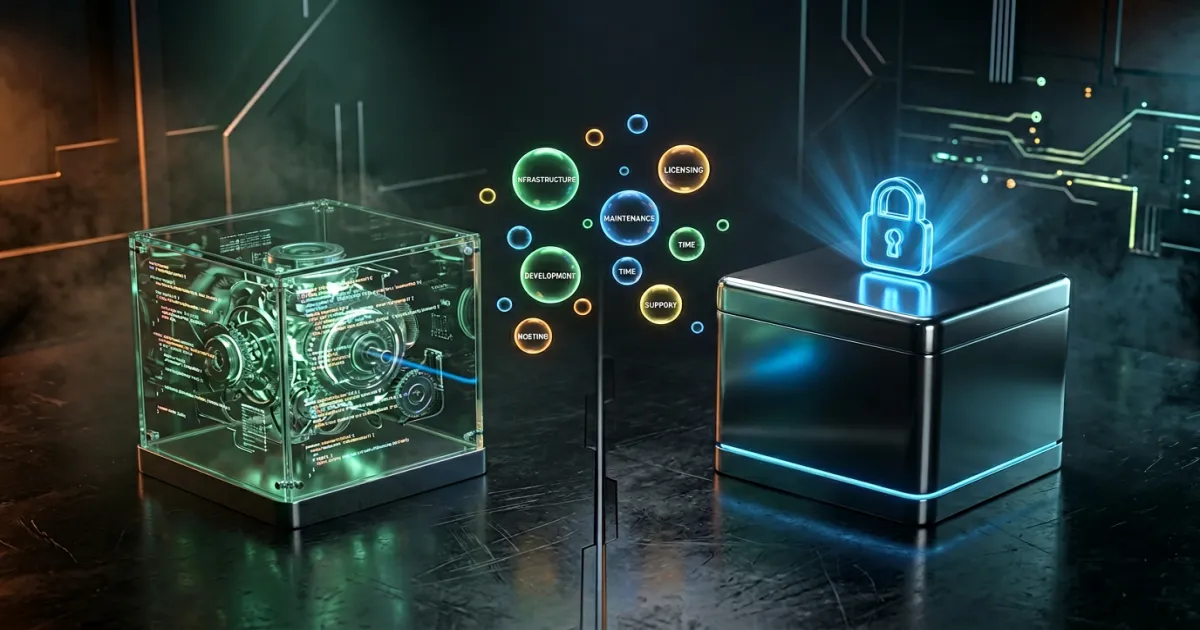

The Lock-In Nobody Talks About

Serverless does not just create vendor lock-in.

It creates architectural lock-in.

Your system becomes:

- event-driven

- stateless (sometimes artificially)

- tightly coupled to provider primitives

Moving away is no longer a simple migration.

It is a redesign.

And redesigns are expensive, not just in infrastructure, but in time, coordination, and risk. The same lock-in challenge applies to multi-cloud vs single vendor decisions, where the hidden cost is not the technology itself but the architectural dependency it creates.

Why Teams Miscalculate This

There are a few consistent patterns behind misjudgment.

- Cost is modeled linearly, without accounting for scale effects

- Execution inefficiency is ignored because it feels small

- Data transfer is underestimated or overlooked

- Decisions are optimized for speed, not long-term economics

None of these are mistakes individually.

Together, they create blind spots.

Where Serverless Is Actually the Right Choice

Serverless is extremely effective in specific conditions:

- unpredictable or bursty traffic

- early-stage products

- low operational capacity

- event-driven workloads

In these scenarios, speed and flexibility matter more than cost optimization.

And serverless delivers that exceptionally well.

Where Traditional Backend Wins

As systems mature, priorities shift.

Backend becomes more efficient when:

- traffic is stable

- request volume is high

- workloads are predictable

- cost becomes a constraint

At that point, efficiency matters more than abstraction.

What Experienced Teams Actually Do

In practice, most experienced teams converge to a hybrid model.

They do not choose one or the other.

They combine both.

- serverless for bursty, async, edge workloads

- backend for core APIs and steady traffic

This allows them to keep flexibility where it matters, while controlling cost where it compounds.

It is not a beginner-friendly setup.

But it is very common in real systems.

The Real Trade-Off

This decision is not about which technology is better.

It is about when and how you pay.

| Approach | What You Optimize | What You Accept |

|---|---|---|

| Serverless | Speed & simplicity | Cost volatility |

| Backend | Efficiency | Operational overhead |

| Hybrid | Balance | Architectural complexity |

There is no free path.

Only trade-offs that become clearer over time.

Final Takeaway

Serverless is not a mistake.

Using it without understanding the cost curve is.

Most teams do not abandon serverless because it is bad.

They abandon it because they outgrow its economics.

And by the time they realize that, the system is already shaped around it.

The best teams do not ask:

“Is serverless good or bad?”

They ask:

“At what point does it stop being the right tool?”