The Opus 4.7 Tokenizer Tax: Why Anthropic's "Unchanged Pricing" Just Quietly Raised Your AI Bill 35%

By Framesta Fernando · Engineering Manager & Technical Architect · 14 min read · Published April 16, 2026

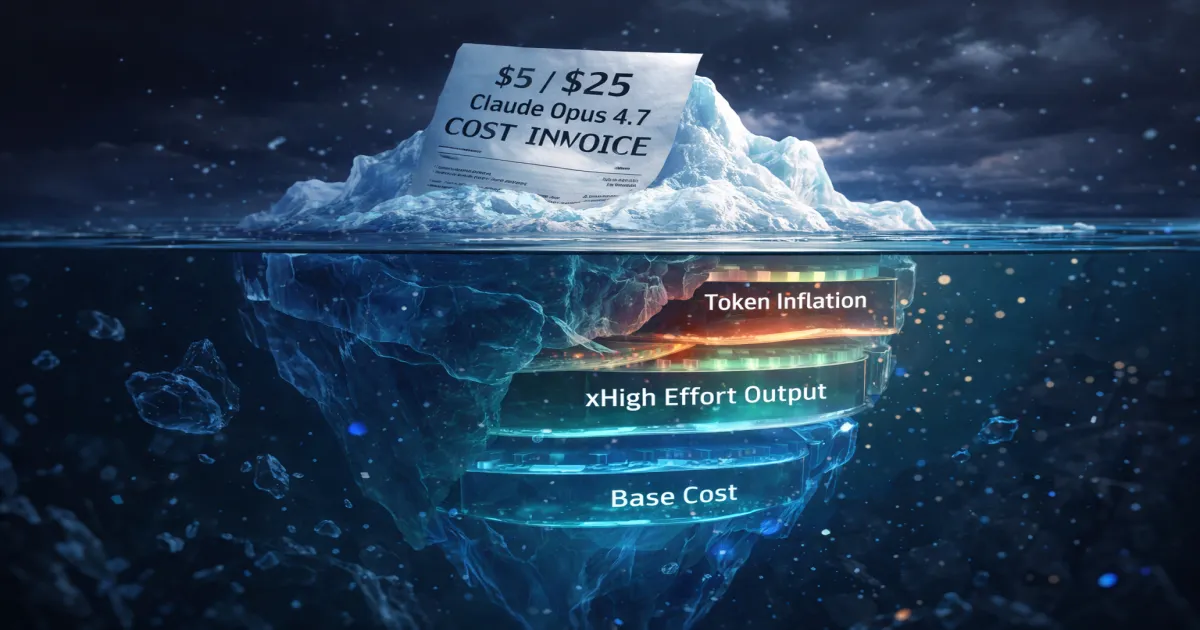

TL;DR: Claude Opus 4.7 launched today at the same $5 per million input tokens and $25 per million output tokens as Opus 4.6. But a new tokenizer inflates the same English input by up to 1.35x, and the new

xhighdefault makes the model think more before responding. Most teams will see their Opus bill rise 35-50% next month for identical workloads. The sticker price did not change. The Claude Opus 4.7 cost did.

Anthropic shipped Opus 4.7 this morning with the headline "pricing remains unchanged." Your CFO read that. Your monthly bill will not agree.

The Pricing Page Said One Thing. The Invoice Will Say Another

Pricing pages are the easiest part of vendor due diligence to verify. You open the docs, you find a number per million tokens, you multiply by your projected usage, you put it in a spreadsheet. For two years, that has been roughly how engineering teams evaluated frontier model migrations. Anthropic dropped Opus 4.7 today with a press line every founder and engineering manager noticed: "$5 per million input tokens, $25 per million output tokens, unchanged from Opus 4.6." The implication felt obvious. Same price, more capability, free upgrade.

That implication is exactly what makes this release expensive.

What Anthropic shipped is not a free upgrade. It is a model that costs the same per token but spends more tokens per task, often by a lot. The launch communication is technically correct in the same way that a tax bracket is technically correct. The unit price did not move. The unit count did. Every platform team that flips claude-opus-4-7 into production this week will discover the difference somewhere between day 14 and day 21 of next month, when their finance dashboard finally catches up.

This article is not an Opus 4.7 hype piece, and it is not anti-Anthropic. The model is genuinely better. What it is, is the thing that should have been on the slide deck and was not.

The Mental Model That Just Broke

The mental model most teams use for LLM cost is straightforward and, at this point, deeply outdated. Cost equals requests times tokens times unit price. When the unit price is held constant, the cost line is held constant. This is the same model the pricing page reinforces, the same one your finance team uses to forecast OpEx, and the same one every executive summary leaves intact when announcing a migration.

The flaw is that "tokens" is not a stable unit across model versions. Tokenizers change. Effort levels change. Self-verification behavior changes. Each of these inflates the token count for the same logical request, and once you multiply that inflation by your monthly volume, the unit-price-stable bill becomes a fiction.

The unit price held. The unit definition shifted underneath it.

For Opus 4.7, three things shifted at once: a new tokenizer that produces more tokens for the same English text, a new default xhigh effort level that produces more thinking tokens, and a self-verification pass that consumes additional output tokens before the model commits an answer. Each is a quality improvement. Together they are an invoice surprise.

A Concrete Example: One Migration, $39,500 Per Month Surprise

Take a team running a customer support copilot on Opus 4.6. Their workload: 8 million inference requests per month. Average input: 1,200 tokens (system prompt, customer message, retrieved context). Average output: 350 tokens (response with light reasoning). That is roughly 9.6 billion input tokens and 2.8 billion output tokens per month.

On Opus 4.6, the math was simple. Input cost: 9,600 × $5 = $48,000. Output cost: 2,800 × $25 = $70,000. Total: $118,000 per month before prompt caching savings.

Switch to Opus 4.7 with no other changes, no migration work, just claude-opus-4-6 to claude-opus-4-7 in the model parameter. Anthropic's migration guide states the new tokenizer maps the same English content to "roughly 1.0 to 1.35x" tokens. Independent migration tests cluster around 1.25x for English prose, closer to 1.35x for code, JSON, and Korean text.

Apply 1.25x conservatively to input. The same 1,200-token prompt now meters at 1,500 tokens. Monthly input climbs from 9.6B to 12B tokens. Cost: 12,000 × $5 = $60,000. Output is more nuanced. The new xhigh default and adaptive thinking consume more output tokens on agentic and multi-step tasks. Per Anthropic's own internal coding eval, Opus 4.7 produces "more output tokens" at higher effort levels, with the net being favorable on cost-per-resolved-task but unfavorable on cost-per-request. For our copilot workload, output tokens climb roughly 1.4x to 3.9B. Cost: 3,900 × $25 = $97,500.

New total: $157,500 per month. Same workload, same business outcome metric, $39,500 higher. That is a 33.5% increase, with no observable change in product behavior to justify it to anyone above the engineering manager level.

Where the "Same Price" Intuition Breaks

The "same price" intuition does not survive contact with what Anthropic shipped. The breakdown happens in five places at once.

- The tokenizer change is silent and unmeasurable from outside. Your billing dashboard reports tokens and dollars. It does not report "tokens per equivalent semantic unit." A 35% increase in token count for the same payload looks indistinguishable from your product getting more popular.

- The xhigh effort default is recommended for "coding and agentic use cases," which describes 70% of production Opus traffic. Teams that do not explicitly downgrade to

mediumwill inherit higher token spend on every request. - Self-verification consumes output tokens before the model returns. The improvement is real, but on a per-request basis you are paying for the verification step whether or not your task needed it.

- Adaptive thinking display defaults to "omitted," which means streaming UIs now show a long pause before any text appears. The fix,

display: "summarized", restores progress at the cost of more output tokens streamed back to your client. budget_tokensis deprecated in favor oftask_budget. Code that still passes the old parameter is accepted for now but will silently behave differently, leaving you without the cost ceiling you thought was in place.

Each of these, in isolation, is a 5-15% effect. Stacked, they routinely cross 40% on real workloads modeled in the last 24 hours.

How the Tokenizer Tax Hits at Each Stage

Cost behavior diverges sharply by stage. The headline 1.25-1.35x tokenizer inflation hits everyone, but everything downstream depends on workload shape, traffic volume, and how much of your stack already locked in to Opus-specific defaults.

Early stage (under $5K/month on Opus)

A seed-stage team running a single AI feature, maybe 200K to 500K requests per month. The bill jumps from about $1,800 to $2,400. Annoying, not material. The risk here is psychological, not financial. The team treats this as the new baseline, never investigates the cause, and locks in a habit of accepting silent vendor-side cost shifts as a cost of doing business.

The right move at this stage is the boring one. Switch to claude-opus-4-7 because the quality improvement on coding and vision is real, downgrade effort to medium for non-critical paths, and ignore the rounding error on the bill. Just write down what you saw, because at the next stage the same mechanism multiplies.

Growth stage ($20K-$80K/month on Opus)

A Series A or B team where Opus is the workhorse for one or two production features. This is the danger zone. The base bill was already a line item that finance asked about quarterly. The 33% jump from our copilot example puts a $50K/month workload at $66K and forces an unexpected conversation with the CFO about why "no architectural changes happened" but the bill still moved.

This is also the stage where the second-order effects show up. Cursor users on the team start defaulting to Opus 4.7 for their personal coding flow because Cursor surfaces it as the new recommended model. Per-developer inference cost on the Cursor side rises in lockstep. The same dynamic we covered in the developer ROI illusion between Cursor and Windsurf plays out, but on the model side rather than the editor side. The validation tax does not disappear, it just relocates.

The right move: instrument token counting on every Opus request, build a per-feature cost dashboard before migrating, and route only the requests that genuinely benefit from 4.7's capabilities. Everything else stays on Opus 4.6 or downshifts to Sonnet 4.6.

Scale stage ($150K+/month on Opus)

A late-stage company with Opus deeply embedded in agentic workflows, multi-tool orchestration, and high-stakes inference. Here the math gets ugly fast. A $200K base inference bill becomes $266K-$320K depending on workload mix. Annual run rate jumps by $800K-$1.4M for the same product behavior.

Worse, scale-stage teams typically have multiple internal consumers of Opus: the product agent, the internal dev copilot, the support summarizer, the data analyst. Each one inherits the tokenizer change. Each one might or might not have engineers paying attention to effort settings. The aggregate drift is invisible because no single team sees the full picture, which is structurally identical to the handoff problem in multi-agent orchestration. Every team's local optimization ignores the shared cost surface.

This is where smart teams pause migration entirely until they can model the change per workload, route aggressively between Opus 4.6, Opus 4.7, and Sonnet 4.6, and renegotiate enterprise pricing with Anthropic with the new token math in hand. The brute-force migrate-everything approach turns into a six-figure surprise.

The Hidden Cost Layers Nobody Budgets For

The token count increase is the visible part. The invisible part is where the actual margin damage happens, and most teams will not see it until invoice cycle two.

| Cost Component | Opus 4.6 (baseline) | Opus 4.7 (same workload) | Real Driver |

|---|---|---|---|

| Input token spend | $48,000 | $60,000 | Tokenizer inflation 1.25x |

| Output token spend | $70,000 | $97,500 | xhigh effort plus self-verification |

| Streaming UX latency cost | ~$0 | ~$1,200 | "omitted" thinking display causes perceived hangs |

| Failed request retries | ~$2,000 | ~$2,800 | Stricter instruction following breaks loose prompts |

| Prompt audit engineering time | $0 | $4,000-$8,000 (one-time) | Re-tuning prompts that worked on 4.6 |

| Cache hit rate degradation | High | Lower (first 30 days) | New tokenizer invalidates existing cache |

| Total monthly delta | baseline | +$30K to +$45K (recurring) | Compound |

The line that nobody budgets for is cache invalidation. Anthropic's prompt caching gives up to 90% discount on cache hits ($0.50 per million tokens versus $5 standard input). On Opus 4.6, mature teams ran 60-80% cache hit rates on system prompts and tool definitions. On migration to 4.7, that cache is cold. For the first two to four weeks, you pay full input price on prompts that previously cost a tenth as much. By the time the cache rebuilds, you have already paid an extra $10K-$30K in transitional inference, depending on volume.

This pattern is identical to the one we mapped out in why AI cost explodes after scale. The invoice grows with system evolution, not user growth. A model swap is system evolution. The cost reflects it whether or not anyone modeled the transition.

Migration is not a configuration change. It is a re-pricing event your CFO has not been warned about.

The Tokenizer Is the New Pricing Page

Here is the part most engineering leaders are not internalizing fast enough. The pricing page is no longer the source of truth for cost. The tokenizer is.

For three years, the LLM market trained everyone to read pricing in dollars per million tokens. That number was the contract. Vendors competed on it, blog posts compared it, internal cost models projected against it. The implicit assumption was that "a token" is a stable, vendor-neutral unit, like a kilowatt-hour. That assumption was always slightly false. With Opus 4.7, it is now visibly false in a way that materially affects production budgets.

Tokenizers are versioned, vendor-controlled, and silent. Anthropic, OpenAI, Google, and every self-hosted Llama deployment use different tokenizers. Within each vendor, different model versions can use different tokenizers. The same English sentence costs a different number of tokens on each one. The same JSON payload costs different numbers. The same code block, the same Korean paragraph, the same screenshot OCR result, all different. None of this is anywhere on a pricing page.

What this means in practice is that "cost per million tokens" is approximately as informative as "cost per cubic foot of fuel" without specifying whether the fuel is gasoline, diesel, or hydrogen. The unit is the same word. The energy density is wildly different.

| What Teams Track | What Actually Drives the Bill | Implication |

|---|---|---|

| Dollars per million tokens | Tokens per logical request | Pricing page is necessary but insufficient |

| Request count | Tokens per request × tokenizer version | Migrations re-price every existing request |

| Output token count | Effort level × thinking budget | Defaults shift cost without user action |

| Cache hit rate | Tokenizer stability across model versions | Model upgrades reset cache, costing weeks of overhead |

| Per-vendor unit price | Cost per business outcome (resolved ticket, compiled function, deployed PR) | The only metric that survives across vendors |

The teams that survive the next five years of frontier model upgrades will be the ones that stop tracking cost in dollars per million tokens and start tracking cost per resolved business unit. Cost per resolved support ticket. Cost per merged PR. Cost per deployed agent run. Those metrics are tokenizer-invariant. The pricing page metric is not.

The vendor controls the tokenizer. You control the workload. Track the thing you control.

A Real Cost-Per-Outcome Formula for Opus 4.7

The fix for the tokenizer tax is not better forecasting against the pricing page. It is a different formula. Stop modeling cost as price times tokens. Start modeling it as outcome cost, and let the vendor's tokenizer become an internal variable, not a contract.

Effective Cost Per Outcome = (T_in * P_in * M_t) + (T_out * P_out * M_e * M_v)

--------------------------------------------------

R_oWhere:

- T_in = average input tokens per logical request, measured on the previous tokenizer version

- P_in = vendor input price per token

- M_t = tokenizer multiplier (1.0 to 1.35 for Opus 4.6 to 4.7)

- T_out = average output tokens per logical request, measured on the previous effort setting

- P_out = vendor output price per token

- M_e = effort level multiplier (medium to high to xhigh, roughly 1.0, 1.3, 1.7 on output volume)

- M_v = self-verification multiplier (1.0 if disabled, ~1.15 if enabled)

- R_o = resolution rate (the percentage of requests that actually succeeded at the business outcome)

The point of the formula is not the exact numbers, which vary per workload. It is forcing every cost discussion to include the multipliers nobody currently sees on the pricing page.

| Variable | What Increases It | What You Can Control |

|---|---|---|

| M_t (tokenizer) | Vendor model version upgrade | Choose when to migrate, batch the cache rebuild cost |

| M_e (effort) | xhigh defaults, complex agentic tasks | Route low-complexity requests to medium effort |

| M_v (verification) | Self-checking model behavior | Disable for low-stakes paths |

| R_o (resolution) | Better model quality | This is the only multiplier that lowers cost per outcome |

The trap is treating Opus 4.7 as a pure replacement for 4.6. The reality is that for cost-per-outcome to improve, the resolution rate gain (R_o) has to outpace the combined inflation from M_t, M_e, and M_v. For coding-heavy and agentic workloads, Anthropic's data shows it does. For straightforward prompt-response chat, it often does not.

Migrate Now, Stage Later, or Stay on 4.6

Most teams will frame this as a binary, ship today or hold. The real choice has four options, and the right one depends on workload mix.

| Decision | What You Gain | What You Pay | When It Breaks |

|---|---|---|---|

| Migrate everything to Opus 4.7 today | Best capability across coding, vision, agentic tasks | 35-50% bill increase month one, cache rebuild cost, prompt re-audit | Workloads that were cost-sensitive on 4.6 become unsustainable on 4.7 |

| Stage migration by workload class | Capture quality gains where they matter, contain cost on the rest | Engineering time to instrument and route, 4-6 week migration window | Routing logic becomes its own maintenance burden, covered in smart routing across model tiers |

| Stay on Opus 4.6 for now | Predictable cost, stable cache, no prompt re-tuning | Falling behind on coding and vision benchmarks; 4.6 will be deprecated within 12-18 months | The first deprecation notice arrives and forces a rushed migration anyway |

| Downshift to Sonnet 4.6 where possible | 40% lower input cost, 40% lower output cost than Opus 4.7 | Quality regression on hardest tasks; only viable for medium-complexity workloads | Reasoning-heavy work degrades silently |

The honest answer for most growth-stage and scale-stage teams is the second option: stage migration by workload class, gated by per-feature cost-per-outcome measurement. The first option is the most common choice and the most expensive one. The third is a delay tactic that becomes the second option six months later, with all the same work but compressed into less time.

What to Do at Your Stage

The tokenizer tax does not affect every team equally, and the right response is not the same across the board.

Early stage (under $5K/month on Opus)

Migrate to Opus 4.7 today, downgrade effort to medium for everything except your hardest coding paths, and treat the bill increase as a learning exercise. Build a one-line cost-per-outcome metric now, even if the absolute numbers are tiny. The discipline matters more than the dollars at this stage.

Growth stage ($20K-$80K/month on Opus)

Hold migration for two weeks. Use that time to instrument token counting per request, build a per-feature cost dashboard, and identify which workloads genuinely benefit from 4.7's capability gains. Then migrate one feature at a time, measuring cost-per-outcome before and after. Expect 2-3 features to win and 1-2 to be moved back to 4.6 or down to Sonnet 4.6. This is also the right moment to revisit the trade-offs we covered in OpenAI vs self-hosted LLM cost, because the tokenizer change makes self-hosted Llama on a fixed-cost GPU look meaningfully better for routine traffic.

Scale stage ($150K+/month on Opus)

Treat this as a renegotiation event, not a migration. Anthropic enterprise sales teams are aware of the tokenizer math and have flexibility. Bring measured token-inflation data from your actual workloads, not estimates from blog posts. Negotiate either a tokenizer-adjusted unit price or a workload-specific pricing tier. In parallel, build the routing infrastructure described in smart routing and self-hosted AI cost savings so that you can shift traffic between Opus 4.7, Opus 4.6, Sonnet 4.6, and self-hosted models based on real-time cost-per-outcome signals.

The mistake at every stage is the same: assuming the migration is free because the per-token price did not change.

Two Mistakes Already Visible Today

Two mistakes are already showing up in production decks I have reviewed this morning.

The first is the "drop-in upgrade" framing. Engineering leads are pitching the migration to leadership as "same cost, better quality" because that is what the announcement said. When the bill arrives 35% higher, the credibility hit lands on the engineer who pitched it, not on Anthropic's marketing copy. Anyone presenting Opus 4.7 as a free upgrade is signing up for a difficult Q3 review.

The second is silent reliance on xhigh becoming the default for coding and agentic use cases. Anthropic recommends it. Most teams will not override it. The result is that the cost increase is largest exactly where Opus is most heavily used, and nobody made an explicit decision to spend that money. As we covered in the broader AI cost explosion analysis, agentic usage is the silent killer of margins, and xhigh is the new accelerant.

Reading the Invoice You Did Not Sign

Anthropic shipped a better model today. The marketing said the price did not change. Both statements are true. Neither statement is the whole truth.

The whole truth is that frontier model pricing has entered a new regime. The unit price is now a marketing artifact. The actual cost of inference depends on a tokenizer you do not control, an effort level you may not have configured, a self-verification step you cannot disable, and a cache that just got invalidated. The pricing page is the easy part. The math behind it is the hard part, and Anthropic has made the math more complicated without changing the page.

The teams that will do well in this regime are the ones that stop quoting "$5 per million input tokens" in their cost models and start quoting "cost per resolved support ticket" or "cost per merged PR" instead. Vendors will keep changing tokenizers. Vendors will keep introducing new effort defaults. Vendors will keep adding self-verification, multi-step thinking, and adaptive reasoning that pushes token counts up. None of that is a problem if you measure outcomes. All of it is a problem if you measure tokens.

The pricing page is the contract. The tokenizer is the bill.

If you are migrating to Opus 4.7 this week, do it with eyes open. If your CFO asks why the bill went up, do not pretend it was traffic. It was not.

FAQ

Q: Did Anthropic raise the price of Claude Opus 4.7?

No, the per-token price is identical to Opus 4.6 at $5 per million input tokens and $25 per million output tokens. However, Opus 4.7 ships with a new tokenizer that maps the same English content to roughly 1.0 to 1.35x more tokens, and a new xhigh default effort level that produces more output tokens on coding and agentic workloads. Real bills typically rise 30-50% on identical workloads despite the unchanged unit price.

Q: How much will my Opus bill go up after migrating to 4.7?

Expect a 25-35% increase on input token spend due to the tokenizer change, and a 20-40% increase on output token spend if you keep the new xhigh default for coding and agentic tasks. Workloads heavy in code, JSON, or non-English languages tend to see the larger end of the range. Plain English chat workloads see closer to 25-30% total increase. The first month also includes one-time cache rebuild cost as the new tokenizer invalidates existing prompt caches.

Q: Should I migrate to Opus 4.7 immediately?

For workloads where capability gains matter (complex coding, agentic orchestration, vision-heavy tasks), yes, but stage the migration by workload class rather than swapping all traffic at once. For workloads where Opus 4.6 was already meeting quality bars (chat, summarization, classification), there is no urgency. Anthropic typically supports prior model versions for 12-18 months, giving you time to migrate based on cost-per-outcome data rather than launch-day excitement.

Q: What is the xhigh effort level in Opus 4.7?

xhigh is a new effort setting introduced in Opus 4.7 that sits between high and max. Anthropic recommends it as the starting point for coding and agentic use cases. It increases the model's internal thinking budget, which improves task resolution rates but also produces more output tokens per request. Teams that do not explicitly set effort to medium or high will inherit xhigh and the higher token spend that comes with it.

Q: Does prompt caching still work on Opus 4.7?

Yes, prompt caching offers the same up-to-90% discount on cache hits ($0.50 per million tokens versus $5 standard input). However, the new tokenizer means cached prompts from Opus 4.6 do not transfer. The first 2-4 weeks after migration run with significantly reduced cache hit rates, costing 5-15% extra in transitional inference until the cache rebuilds against the new tokenizer.

Q: Is Opus 4.7 worth it compared to Sonnet 4.6 for cost-sensitive workloads?

For most cost-sensitive workloads, Sonnet 4.6 at $3 per million input tokens and $15 per million output tokens remains the better choice. Opus 4.7's capability gains are concentrated in the hardest 15-20% of tasks: complex multi-file refactoring, long-running agentic workflows, and high-resolution vision. For routine inference, Sonnet 4.6 is roughly 60% cheaper on input and 40% cheaper on output, with quality that is competitive on tasks below the Opus complexity threshold.

Q: What is the budget_tokens deprecation in Opus 4.7?

Opus 4.7 deprecates the budget_tokens parameter in favor of a new task_budget mechanism. Code that still passes budget_tokens is accepted for backward compatibility, but the behavior may differ from previous expectations. Teams relying on budget_tokens as a cost ceiling should migrate to task_budget or risk losing the spending guardrails they thought were in place.

Next Read

If you are now thinking about how to actually route traffic between Opus 4.7, Opus 4.6, and Sonnet 4.6 to keep costs predictable, our breakdown of smart routing and self-hosted AI cost savings shows the routing patterns smart teams use to cut total inference cost 60-80% without losing quality.

Sources & Further Reading

- Anthropic: Introducing Claude Opus 4.7

- Claude Docs: What's new in Opus 4.7

- Claude API Pricing Reference

- Help Net Security: Claude Opus 4.7 release analysis

- Dev.to: Opus 4.7 migration test, budget_tokens deprecation

Last updated: April 16, 2026