Most AI Systems Don’t Fail at Intelligence

Most AI systems do not fail because the model is not good enough. They fail because the system around the model cannot execute reliably in production. The output might be correct, but the system that depends on it is not consistent enough to turn that output into real outcomes.

This is the same pattern we have seen before in infrastructure decisions. Early systems look simple and promising, but complexity accumulates in places that were never modeled properly. If you have read about why AI cost explodes after scale, the root cause is not usage alone, but how systems evolve under real conditions. The exact same principle applies here, but instead of cost, the failure shows up in execution.

AI is no longer limited by what it can generate. It is limited by what it can execute reliably.

The Stack Everyone Uses And Why It Works (At First)

Most teams today build AI systems using a familiar stack that looks clean and modular. Typically, it consists of an API layer, an orchestration layer, and an application layer. This structure is intuitive and aligns with how modern SaaS systems are built.

At early stage, this works extremely well. The system behaves predictably, much like serverless platforms before they hit real limits. If you have explored where serverless breaks, the pattern is identical. Simplicity holds until the system starts doing more than it was originally designed for.

The problem is that this stack assumes execution is trivial. It assumes that once the model produces an output, the rest of the system can handle it deterministically. That assumption is where things start to break.

The Missing Layer Most Engineers Ignore

The traditional AI stack is missing a critical component:

👉 The execution layer

This is the part responsible for turning model output into real-world actions. It handles:

- tool execution and API calls

- state management across steps

- retries and failure handling

- side effects such as database writes or external operations

Without this layer, AI remains a thinking system, not a doing system. This is also why many systems feel complete in demos but fragile in production.

This is not very different from classic SaaS architecture decisions. If you have evaluated build vs buy trade-offs, you already know that what looks simple at the surface often hides complexity underneath. The execution layer is exactly that hidden complexity in AI systems.

Where Things Start Breaking

As systems evolve, the gap between “response” and “execution” becomes more visible. What used to be a simple request-response flow turns into a chain of dependent operations.

The system begins to require:

- multi-step reasoning instead of single responses

- integration with multiple tools and services

- persistent state across interactions

- retry logic and fallback mechanisms

- coordination between different system components

Each of these is manageable on its own. Together, they create a system that is no longer simple to reason about.

This is also why many teams start overpaying infrastructure. Complexity increases gradually, but cost and fragility increase non-linearly.

OpenClaw Enters at the Execution Layer

OpenClaw feels different because it operates exactly where traditional stacks are weakest. Instead of focusing on generating better outputs, it focuses on executing tasks within a system.

This changes the role of AI entirely. Instead of being a component, it becomes part of a runtime that manages actions, tools, and workflows.

In practice, this means:

- actions are treated as first-class operations

- tool execution is integrated into the core loop

- workflows are managed as systems, not chains

- execution logic is centralized instead of scattered

This is why OpenClaw often feels like a leap forward. It reduces the amount of glue code required and makes complex workflows feel more natural.

This is similar to the shift seen in infrastructure decisions like multi-cloud vs single vendor, where flexibility increases but so does complexity.

Why It Feels So Powerful (And Why That’s Misleading)

The initial experience with OpenClaw is often impressive. Tasks that previously required custom orchestration suddenly work with less effort. Systems feel more integrated, and development speed increases.

However, this is where many teams misinterpret what is happening.

What OpenClaw removes from your code, it adds into the system.

The complexity does not disappear. It moves. Instead of being explicit in your application, it becomes implicit inside the runtime.

This is very similar to the trade-off between open source and SaaS. When you adopt a more integrated system, you reduce surface complexity but increase hidden dependency.

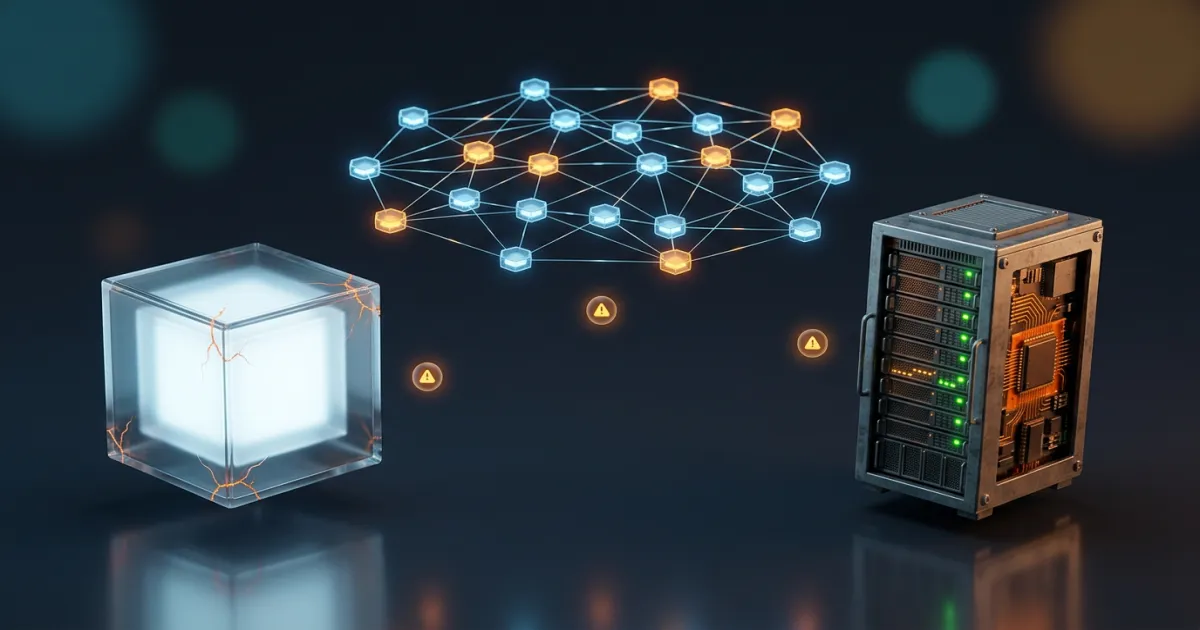

The Trade-Off: Where Complexity Lives

The real difference between APIs, orchestration frameworks, and OpenClaw is not capability. It is where complexity lives.

| Approach | Where Complexity Lives | Strength | Weakness |

|---|---|---|---|

| APIs (OpenAI) | Application layer | Simple, predictable | Limited execution |

| LangChain | Distributed across code | Flexible | Hard to maintain |

| OpenClaw | Inside runtime | Integrated | Hard to debug |

Each approach solves a different problem, but none removes complexity entirely.

Scenario: Same Workflow, Different Systems

Consider a realistic workflow:

“Process input → fetch data → generate response → update system → handle failure”

API-based system

- manual orchestration

- explicit control

- predictable flow

→ easy to reason about → hard to scale complexity

LangChain-style system

- chained logic

- tool integration

- partial abstraction

→ flexible → debugging becomes fragmented

OpenClaw system

- runtime-managed execution

- implicit orchestration

- centralized flow

→ less glue code → more system-level complexity

Where OpenClaw Breaks in Production

OpenClaw solves execution problems, but introduces new challenges that are not obvious at first.

The most common issues are:

Debugging opacity Execution is abstracted, making failures harder to trace

State complexity Multi-step workflows introduce hidden dependencies

Cost amplification More execution steps increase usage, as explained in why AI cost explodes after scale

Infrastructure overhead Running and scaling the system introduces new operational requirements, similar to openai vs self-hosted LLM trade-offs

Security surface Autonomous execution increases risk exposure

The cost aspect becomes especially important when combined with openai vs self-hosted LLM cost, where execution complexity directly impacts infrastructure decisions.

Hidden Complexity Breakdown

| Layer | Problem | Impact |

|---|---|---|

| Execution flow | Hard to trace | Debugging difficulty |

| State handling | Implicit dependencies | Fragility |

| Cost behavior | Multi-step execution | Cost growth |

| Infrastructure | Scaling overhead | Operational cost |

The Real Shift: From Tools to Systems

The biggest change OpenClaw introduces is conceptual. It shifts AI from being a tool into being a system.

Traditional AI usage is transactional. You send a request, receive a response, and move on. OpenClaw introduces persistent workflows, where actions, state, and execution are continuously managed.

This is similar to the transition from simple SaaS tools to full platforms. If you have explored how to compare SaaS tools objectively, you know that the real difference is not features, but system behavior over time.

APIs scale usage. Systems scale complexity.

Connecting Cost, Infrastructure, and Execution

This is where everything connects.

- Cost increases because systems become more complex

- Infrastructure decisions change because systems become heavier

- Execution becomes harder because systems become stateful

If you combine:

- why AI cost explodes after scale

- openai vs self-hosted LLM cost

- and this execution layer

You get a complete picture:

👉 AI systems do not break in one place 👉 they break across layers

When OpenClaw Actually Makes Sense

OpenClaw is powerful, but not universally applicable.

It works best when:

- workflows are multi-step and deeply integrated

- execution matters more than response quality

- system behavior is stable and understood

- team can handle system-level complexity

When It Does Not

OpenClaw is often the wrong choice when:

- use case is simple or single-step

- product is still evolving rapidly

- cost control is critical early

- team lacks infrastructure experience

In these cases, APIs are not a limitation. They are an advantage.

The Mistake Most Teams Make

Most teams do not misunderstand OpenClaw. They misunderstand timing.

They adopt it because it feels powerful, not because the system requires it. This is the same mistake seen in multi-cloud vs single vendor decisions, where complexity is introduced before it is needed.

- Too early → unnecessary complexity

- Too late → missed optimization opportunity

The Real Question

The question is not whether OpenClaw is better than APIs or orchestration frameworks.

The real question is:

Has your system reached the point where execution is the bottleneck?

Because once you introduce an execution layer, you are no longer building a simple AI feature. You are operating a system that behaves more like infrastructure than application code.

AI does not become powerful when it generates better answers. It becomes powerful when it can execute reliably at scale.